Innovations such as high dynamic range imaging systems and the use of neural nets to recognize objects are expanding the use cases for bin picking.

HANK HOGAN, CONTRIBUTING EDITOR

Consider a robot performing bin picking, which involves recognizing randomly arranged objects, picking them up, and placing them in a new position. This process is essential in manufacturing, and its automation is often the goal.

There is a challenge, though, when replacing people with robots in bin picking, said Bernhard Walker, a senior engineer at automation and robot supplier FANUC America.

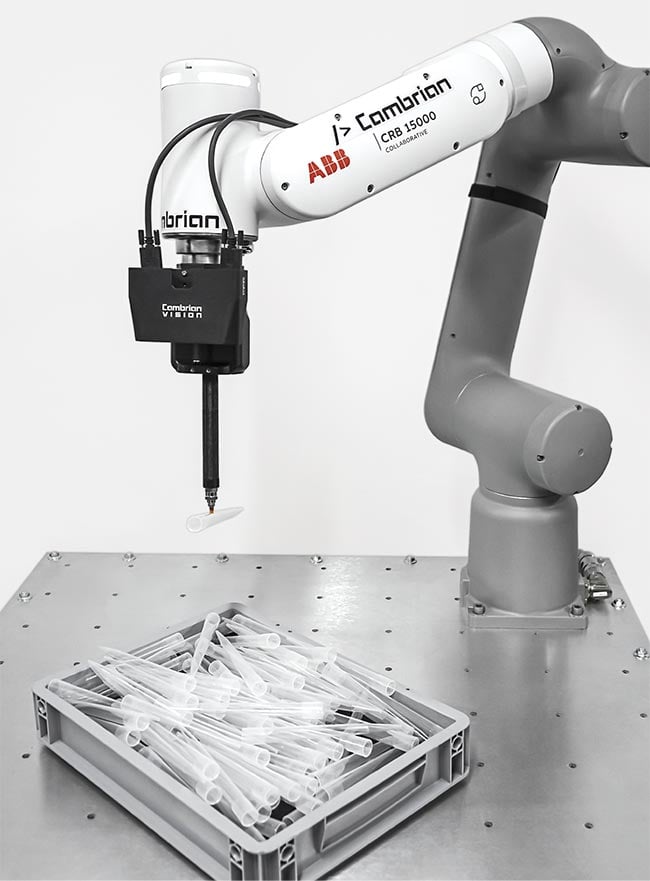

Bin picking performed using two standard industrial cameras (housed in a black enclosure) spaced a known distance apart to generate 3D information. Courtesy of Cambrian Robotics.

“Robots are terrible at it in some respects compared to humans,” he said.

Part of the reason that people excel is their ability to use touch when manipulating objects, Walker said. Human fingers outperform vacuum, magnetic, or simple open/close grippers.

FANUC equips its robots with its own proprietary vision systems and works with customer-supplied vision solutions. Based on this experience, Walker said another key advantage that people possess is their vision, because humans traditionally distinguish one object from another more effectively than a camera can.

Recent advancements in cameras, such as high dynamic range systems, are improving automated bin picking performance. At the same time, software innovations, such as neural net AI trained to recognize objects, are making it possible to automate bin picking of varied shapes, such as agricultural products, or to achieve good bin picking results with less expensive vision systems.

The standard approach to overcoming computer vision drawbacks is to use a 3D camera to generate a point cloud, a collection of x, y, and z coordinates of the detected surface of the object. The system compares this 3D data and a 2D image to a computer-aided design (CAD) model of a part to select a point to pick.
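As a rough illustration of the data involved, a point cloud can be held as a plain list of (x, y, z) tuples. The sketch below returns the topmost point as a naive pick candidate. The function name, the millimeter units, and the highest-point heuristic are assumptions made for illustration; they stand in for, and do not reproduce, the CAD-matching step described above.

```python
from typing import List, Tuple

# One surface point: (x, y, z) in millimeters, with z pointing up out of the bin (assumed convention).
Point = Tuple[float, float, float]


def naive_pick_point(cloud: List[Point]) -> Point:
    """Return the highest surface point as a simple pick candidate (illustrative heuristic only)."""
    if not cloud:
        raise ValueError("empty point cloud")
    return max(cloud, key=lambda p: p[2])


# Hypothetical three-point cloud; a real capture holds far more points.
cloud = [(10.0, 5.0, 2.0), (12.0, 7.5, 40.0), (30.0, 8.0, 18.5)]
print(naive_pick_point(cloud))
```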

Shiny surfaces

However, many industrial objects that are bin picked are metallic, and therefore often shiny, while the rest are composed primarily of plastic. During operation, 3D sensors often use projected light, such as a pattern of dots or lines. The sensors determine the distance to an object and its shape from reflections of the projected light.

Bin picking and disentangling metal hooks using AI software working with a 3D camera (top). Bin picking semitransparent medical bottles, enabled by high dynamic range sensors and AI software (bottom). Courtesy of CapSen Robotics.

Bouncing light off shiny surfaces results in bright spots, leading to distortions, artifacts, and missing data in 3D point clouds, said John Leonard, product marketing manager at 3D camera maker Zivid. The situation has changed in the last few years with the advent of high dynamic range sensors.

“We can take a capture down to the deep dark blacks in the absorbance, to the mid-range and over the top range. We can get over 80 dB of dynamic range with a single acquisition,” Leonard said. Thus, the cameras can image highly absorbent and mirror-like surfaces.

For comparison, standard dynamic range sensors offer a third to half of the dynamic range of high dynamic range sensors. High dynamic range sensors rival the 90-dB range that the eye spans between seeing objects in bright sunlight and perceiving them in starlight.
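To put the decibel figures quoted above in more familiar terms, the short sketch below converts a dynamic range in dB to a brightest-to-darkest ratio. It assumes the 20·log10 convention commonly used for image sensors, which the article does not state; under that assumption, 80 dB corresponds to a ratio of 10,000:1. The function name is hypothetical.

```python
def db_to_contrast_ratio(db: float) -> float:
    """Convert dynamic range in dB to a brightest-to-darkest signal ratio.

    Assumes the 20*log10 convention often used for image sensors.
    """
    return 10 ** (db / 20.0)


# The two figures mentioned in the text above.
for label, db in [("high dynamic range sensor", 80), ("human eye", 90)]:
    print(f"{label}: {db} dB is roughly {db_to_contrast_ratio(db):,.0f}:1")
```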

Better sensor performance pays dividends, Leonard said. Industrial robots often use pincher grippers or other designs with low error tolerance. This requires sub-millimeter accuracy on the location of surface points. Otherwise, the gripper may miss the target, leading to a failed pick or a damaged target.

Leonard said that temperature, lighting, vibration, and other environmental variables affect the performance of a camera in an industrial setting. Temperature, for example, can vary significantly due to furnaces, welding operations, and other heat sources. Zivid counters these effects through its system design and active cooling within the camera, according to Leonard.

Spatial intelligence

There have been significant advancements in 3D imaging, said Jared Glover, CEO of CapSen Robotics. The company’s machine learning-based software gives robot arms more spatial intelligence, allowing them to accomplish tasks such as disentangling a pile of hooks. This feat only became possible a little more than five years ago, Glover said.

In solving this problem, CapSen used AI techniques, including machine learning. This involved collecting several 3D images of piles of hooks. Feedback and feedforward mechanisms then changed the weights of neurons in a custom artificial neural network, resulting in a detection model that could locate the 3D positions and orientations of hooks in a bin. AI-driven software then planned gripper and arm trajectories to successfully pick and untangle the hooks.

Today, more powerful computer processing aids in achieving these and other bin picking advancements. What’s more, AI makes bin picking more robust in ways that are not obvious. Bin picking, or any vision-guided robotics, by its nature is not perfect, Glover said.

An assortment of industrial parts that could be bin picked, seen in a 3D point cloud (top) and in color (bottom), generated by a 3D camera. The 3D data that shows the position in space of a surface is essential to bin picking. Courtesy of Zivid.

“Any time the robot is doing something differently every time or there’s something different in the process every time — a different part or position or anything — there’s some variability, and therefore there’s some error rate,” he said.

CapSen manages these situations through error recovery. Suppose that a bin pick fails. An action script in the CapSen software would specify steps that the robot should take. This may include reorienting the part, attempting to pick another part, or another means to complete the pick.
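The idea of an ordered recovery script can be sketched as a simple loop: try the normal pick, then fall back through the listed recovery actions until one succeeds. The action names, the function, and the simulated robot below are all hypothetical and are not taken from CapSen's software.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical action names, loosely based on the recovery options listed above.
RECOVERY_SCRIPT: Tuple[str, ...] = ("reorient_part", "pick_another_part")


def pick_with_recovery(
    attempt: Callable[[str], bool],
    script: Sequence[str] = RECOVERY_SCRIPT,
) -> Tuple[bool, List[str]]:
    """Try a normal pick, then each recovery action in order, until one succeeds."""
    tried: List[str] = []
    for action in ["pick", *script]:
        tried.append(action)
        if attempt(action):
            return True, tried
    return False, tried


# Simulated robot: the first pick fails, and reorienting the part succeeds.
outcomes = {"pick": False, "reorient_part": True, "pick_another_part": True}
ok, tried = pick_with_recovery(lambda action: outcomes[action])
print(ok, tried)
```

If every action fails, the function reports failure along with the full list of actions tried, which is the point at which a line might hand the part to a person.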

A properly designed recovery script eliminates or minimizes failures and thereby avoids shutting down a production line. The cost, though, is a variable bin picking time, with a successful initial pick attempt taking much less time than one that goes into error recovery. For this reason, designers of the manufacturing line might need to build in process buffers, such as locations to stage waiting products, Glover said.
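The effect of recovery on timing can be estimated with simple arithmetic. The sketch below assumes a deliberately simplified model in which a failed first attempt always triggers exactly one recovery step of fixed duration that then succeeds. All numbers are hypothetical and are not from the article.

```python
def mean_cycle_time(p_first: float, t_pick: float, t_recovery: float) -> float:
    """Mean seconds per part when a failed first attempt adds one recovery step.

    Simplifying assumption: recovery always succeeds and takes a fixed time.
    """
    if not 0.0 <= p_first <= 1.0:
        raise ValueError("p_first must be a probability between 0 and 1")
    return t_pick + (1.0 - p_first) * t_recovery


# Hypothetical figures: 90% first-attempt success, 4 s per pick, 10 s per recovery.
average = mean_cycle_time(p_first=0.9, t_pick=4.0, t_recovery=10.0)
worst_case = 4.0 + 10.0  # a pick that goes through recovery
print(f"average {average:.1f} s, worst case {worst_case:.1f} s")
```

The gap between the average and the worst case is what a process buffer would have to absorb.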

According to Glover, CapSen uses what he calls programmable AI, machine learning systems with hard limitations. An example would be an arm that is only allowed to move within a specific region or with a certain amount of force. Setting such boundaries ensures that the solutions developed by a neural net do not exceed safe operating conditions or other constraints.
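One way to picture such hard limits is a guard that sits between the learned planner and the robot and constrains whatever the planner proposes. The sketch below clamps a proposed target position and force to fixed bounds. The class, field names, units, and the choice to clamp rather than reject an out-of-bounds command are assumptions for illustration, not a description of CapSen's implementation.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Limits:
    lo: Vec3            # minimum allowed x, y, z in mm (hypothetical workspace)
    hi: Vec3            # maximum allowed x, y, z in mm
    max_force_n: float  # maximum allowed force in newtons


def constrain(target: Vec3, force_n: float, limits: Limits) -> Tuple[Vec3, float]:
    """Clamp a proposed target and force so they never exceed the hard limits."""
    x, y, z = (
        min(max(value, lo), hi)
        for value, lo, hi in zip(target, limits.lo, limits.hi)
    )
    return (x, y, z), min(force_n, limits.max_force_n)


limits = Limits(lo=(0.0, 0.0, 0.0), hi=(500.0, 400.0, 300.0), max_force_n=20.0)
# A planner proposal that strays outside the workspace and exceeds the force limit.
safe_target, safe_force = constrain((620.0, 150.0, -10.0), 35.0, limits)
print(safe_target, safe_force)
```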

Software innovations also make it possible to use simple off-the-shelf industrial cameras for bin picking, said Miika Satori, CEO of Cambrian Robotics. The company developed its software under a fundamental guiding principle, one that applies to bin picking or other vision-guided tasks that people routinely manage.

“A robot should be able to do the same thing,” Satori said.

A person’s two eyes are equivalent to a robot having two cameras spaced a known distance apart. Cambrian’s software does the heavy lifting in transforming what the individual cameras capture into something useful, Satori said. The software takes the input from each camera and determines from that data the location of an object in space. This ability is developed by training the system on a wide variety of virtual images of the part or parts of interest.
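The reason a known camera spacing yields depth can be shown with textbook stereo triangulation: For two parallel, rectified cameras, distance equals focal length times baseline divided by disparity, the horizontal shift of a feature between the two images. This is the classical geometric relationship, not Cambrian's trained model, and the function name and example values are hypothetical.

```python
def depth_from_disparity(focal_px: float, baseline_mm: float, disparity_px: float) -> float:
    """Distance to a point (mm) for two parallel, rectified cameras.

    focal_px:     focal length expressed in pixels
    baseline_mm:  known spacing between the two cameras
    disparity_px: horizontal shift of the same feature between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_mm / disparity_px


# Hypothetical setup: 1200 px focal length, cameras 100 mm apart, feature shifted 150 px.
print(depth_from_disparity(focal_px=1200.0, baseline_mm=100.0, disparity_px=150.0))
```

Because depth is inversely proportional to disparity, nearby objects shift more between the two views than distant ones.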

Bin picking transparent parts, traditionally a difficult problem, using two standard industrial cameras. Courtesy of Cambrian Robotics.

An advantage of this approach over one that uses the active projection of dots or other light is that image acquisition is faster, according to Satori. Active projection may involve sending out a series of line or dot configurations, capturing the data, and then processing it to produce a 3D point cloud. In contrast, Cambrian’s approach uses a single image capture and ambient lighting.

Greater processing power

This technique is practical because computer processing power is much greater than it was in the past. Five years ago, training the classification model that identifies and locates a part might have taken several days or even weeks, Satori said. Today it takes a few hours, making this approach feasible. He said that running the model is very fast, typically taking less than 20 ms.

A key requirement for this method is the existence of a large enough training set of images. Because Cambrian’s approach uses ambient lighting, the training set must include images of the part in question under a wide illumination range as well as in many different orientations.

Bin picking of random parts using a 3D camera to generate point clouds to determine where to pick up a part. Courtesy of Zivid.

As with other bin-picking methods, there will be instances in which the pick fails, perhaps because parts cannot be separated or recognized. If the failure rate is low, then the failed parts can be set aside for a person to deal with. A high failure rate may require further refinement of the bin picking system.

Satori said that this approach can also deal with agricultural products or other items for which no CAD description exists. One way to process products with greater variability is through a deformable CAD model. Using this method, a CAD model of a standard potato would be stretched or squeezed to create other reference potato models. Alternatively, engineers could include a wide variety of potato models in a training set, leading to an AI system capable of recognizing potatoes despite differences in size and other variations.
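The stretch-and-squeeze idea can be sketched as per-axis scaling of a reference model's vertices. This is the simplest possible deformation and is assumed here purely for illustration; the article does not specify how a deformable CAD model is implemented. The function names, scale factors, and stand-in vertices are hypothetical.

```python
from itertools import product
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]


def stretch(vertices: Sequence[Vertex], sx: float, sy: float, sz: float) -> List[Vertex]:
    """Scale a model's vertices independently along x, y, and z."""
    return [(x * sx, y * sy, z * sz) for x, y, z in vertices]


def make_variants(
    vertices: Sequence[Vertex],
    factors: Sequence[float] = (0.8, 1.0, 1.2),
) -> List[List[Vertex]]:
    """Build one reference model per combination of per-axis scale factors."""
    return [stretch(vertices, sx, sy, sz) for sx, sy, sz in product(factors, repeat=3)]


# Hypothetical stand-in for a "standard potato": a few vertices in millimeters.
standard_potato = [(0.0, 0.0, 0.0), (80.0, 0.0, 0.0), (40.0, 50.0, 0.0), (40.0, 25.0, 45.0)]
variants = make_variants(standard_potato)
print(len(variants), "reference models")
```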

As for future developments, Zivid’s Leonard sees ongoing sensor improvements. For instance, structured light 3D cameras, such as Zivid’s, require objects to be still. Leonard predicted that camera advancements will allow these devices to perform dynamic capture, enabling them to complete 3D imaging of moving objects.

On the software side, Satori said that the range of jobs a robot can perform is growing, with AI-driven bin picking and wire assembly already demonstrated. However, in one area there is still room for significant improvement: the time needed to program the system and develop a solution.

A person might need only seconds or minutes of instruction before picking up parts and putting them somewhere. Automating this process, though, takes longer.

“It’s still sometimes many days and maybe weeks to get everything in sync,” Satori said. One avenue of future innovation that he foresees will be to get the programming time down to something approaching what people need.

For his part, FANUC’s Walker said that in the coming years, AI-powered vision could help overcome gripper limitations. For instance, AI-enhanced 2D vision could identify an object’s material, allowing a robot to use suction when handling a relatively flimsy cardboard box and then to switch to a stiff two-finger gripper when dealing with much more rigid chrome tubing.

AI systems are not bulletproof and thus do sometimes make mistakes, he said. A system may aim for 99% or greater reliability in identifying objects and achieving bin picking success. Getting close to 100%, though, typically comes at the expense of system cost or throughput, or both. Hence, there is always a balancing act between speed and reliability.

When it comes to designing and building a bin picking solution, Walker said, “You’ve got to find that happy medium.”