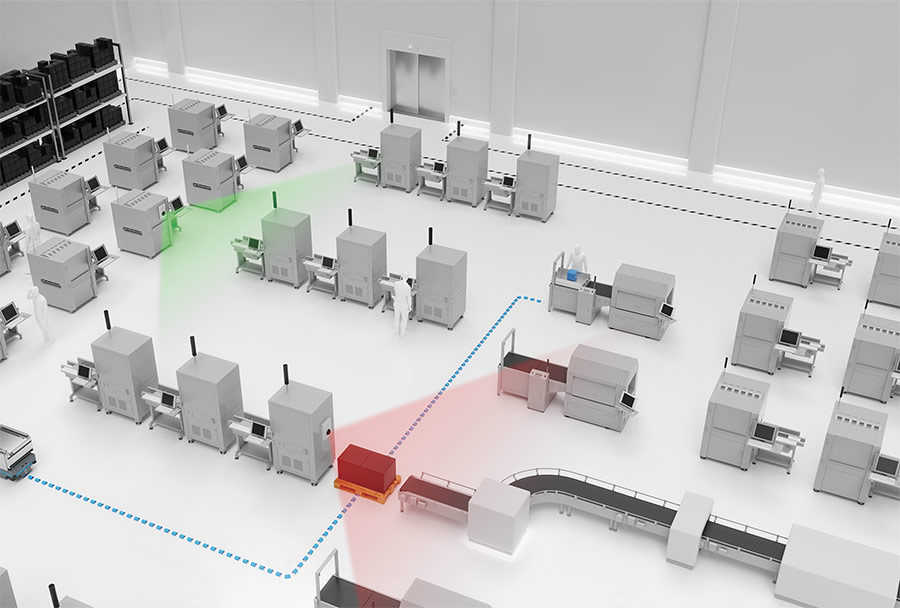

Traditional 2D vision technologies can track robot locations within a facility, whereas 3D vision helps in collision avoidance.

HANK HOGAN, CONTRIBUTING EDITOR

Adding imaging depth to vision systems can improve inspection performance, enhance pick-and-place operations, and help vision-guided robots do a better job of avoiding objects. With falling prices and rising performance, various forms of 3D vision are increasingly popular on the factory floor.

One emerging application is the use of 3D vision for robot navigation. Mobile Industrial Robots A/S, headquartered in Odense, Denmark, for example, offers mobile robots that create a map of the environment for navigation.

According to Josh Cloer, the company’s sales director for the eastern U.S., 3D vision supplements this map data by spotting nearby obstructions.

A mobile robot, seen here at the beginning of the dotted blue line at left, can use 3D vision to detect obstacles in its path. Courtesy of Mobile Industrial Robots.

“The primary task for the 3D vision is to find the obstacles, and then we plan a path around the obstacles that we detect,” he said.
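Cloer's two-step description (detect obstacles, then plan around them) can be illustrated with a textbook grid search. The sketch below is not MiR's planner. It assumes a hypothetical occupancy grid in which cells where the 3D sensor reported an obstacle are marked 1, and it uses plain breadth-first search; the function name `plan_path` is made up for illustration. Production planners typically work on cost maps and use algorithms such as A*.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column) in a hypothetical occupancy grid


def plan_path(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search for a shortest 4-connected path that avoids obstacles.

    grid[r][c] == 1 marks a cell where the 3D sensor reported an obstacle; 0 is free.
    Returns the cells from start to goal, or None if no obstacle-free path exists.
    """
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            # Follow the parent links back to the start, then reverse the list.
            path: List[Cell] = []
            node: Optional[Cell] = current
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1]
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = current
                queue.append((nr, nc))
    return None


# Usage: a 3 x 4 grid with two obstacle cells in the middle row.
grid = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
print(plan_path(grid, (0, 0), (2, 3)))
```

Because breadth-first search expands cells in order of their step distance from the start, the first time it reaches the goal it has found a shortest obstacle-free path in grid steps.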

The robots also have other sensors, including encoders and gyroscopes that track the robot’s journey. All of this data is fused into an integrated picture of where the robot is in a room, as well as where everything else is.
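Encoders and gyroscopes are commonly combined by dead reckoning: the encoders report how far the wheels have traveled, and the gyroscope reports how fast the robot is turning. The following is a generic sketch under simplifying assumptions (planar motion, no wheel slip, one yaw-rate reading per time step); `Pose` and `dead_reckon` are hypothetical names, not MiR's fusion software.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float        # metres
    y: float        # metres
    heading: float  # radians, counterclockwise from the x axis


def dead_reckon(pose: Pose, encoder_distance_m: float,
                gyro_yaw_rate_rad_s: float, dt_s: float) -> Pose:
    """Advance the pose by one time step from encoder distance and gyro yaw rate."""
    # Integrate the gyro's turn rate over the step to get the heading change.
    dtheta = gyro_yaw_rate_rad_s * dt_s
    # Project the traveled distance along the heading at the middle of the step.
    mid_heading = pose.heading + dtheta / 2.0
    return Pose(
        x=pose.x + encoder_distance_m * math.cos(mid_heading),
        y=pose.y + encoder_distance_m * math.sin(mid_heading),
        heading=pose.heading + dtheta,
    )


# Usage: drive 1 m straight ahead in ten 0.1 m steps.
pose = Pose(0.0, 0.0, 0.0)
for _ in range(10):
    pose = dead_reckon(pose, encoder_distance_m=0.1, gyro_yaw_rate_rad_s=0.0, dt_s=0.1)
print(pose)
```

Small errors in each step accumulate, so dead-reckoned poses drift over time. That drift is one reason such estimates are fused with map and vision data rather than used alone.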

It would be simpler to go with a single sensor, but that’s not possible for several reasons, according to Cloer. Safety regulations mandate the use of lidar, for instance, but that technology cannot cost-effectively capture every needed detail. At the same time, 3D vision systems can’t meet safety performance standards, and some 3D vision techniques are affected by ambient light.

Thus, having input from multiple sensors can help overcome the deficiencies of the various technologies. “Understanding the space itself in these environments is a tough task,” Cloer said. “We’re adding more of that capability in the deep learning and sensor fusion.”

3D vision is used to inspect bottles in a rapidly moving production line. Courtesy of SICK.

Industrial locations need varied 3D imaging approaches, said Aaron Rothmeyer, market product manager at sensor maker SICK AG of Waldkirch, Germany. The company’s offerings include stereoscopic, structured light, and time-of-flight 3D imaging products.

Rothmeyer said the safety standard for a driverless vehicle such as a mobile robot is designed for a 2D device looking ahead at ground level in the direction of travel. In an industrial setting, however, hazards may sit in the air above that plane of detection, such as forks extending from a parked forklift.

“That’s where 3D comes in, and additional 2D technologies as well,” Rothmeyer said.
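Rothmeyer's point about hazards above the detection plane can be made concrete with a simple geometric check on a point cloud. In this sketch, the robot-frame axis convention, the envelope dimensions, the scanner height, and the function names are all hypothetical, and the 2D scanner is idealized as a thin horizontal plane.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x forward, y left, z up) in metres, robot frame


def points_in_travel_envelope(points: List[Point], lookahead_m: float,
                              half_width_m: float, robot_height_m: float,
                              floor_margin_m: float = 0.02) -> List[Point]:
    """3D check: points inside the box the robot sweeps as it drives forward."""
    return [
        (x, y, z) for (x, y, z) in points
        if 0.0 < x <= lookahead_m
        and abs(y) <= half_width_m
        and floor_margin_m < z <= robot_height_m  # skip returns from the floor itself
    ]


def points_in_scan_plane(points: List[Point], scan_height_m: float,
                         plane_tolerance_m: float = 0.02) -> List[Point]:
    """Idealized 2D scanner: sees only points close to its single horizontal plane."""
    return [p for p in points if abs(p[2] - scan_height_m) <= plane_tolerance_m]


cloud = [
    (1.0, 0.1, 0.5),   # tip of a raised fork, 0.5 m above the floor
    (1.5, -0.2, 0.2),  # pallet leg at the scanner's height
]
print(points_in_travel_envelope(cloud, lookahead_m=2.0, half_width_m=0.4, robot_height_m=0.6))
print(points_in_scan_plane(cloud, scan_height_m=0.2))  # the fork tip is not in this list
```

With these assumed numbers, both points fall inside the 3D travel envelope, but only the pallet leg intersects the 2D scan plane; the raised fork tip is the kind of overhead hazard a planar sensor alone would miss.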

He added that users of industrial driverless vehicles want to maximize uptime and prevent collisions that can damage equipment; consequently, they want the extra protection provided by having multiple sensors. Users see any additional expense incurred as a worthwhile investment, Rothmeyer said.

3D vision improves inspection of printed circuit boards populated with components by enhancing the ability to detect defects. Courtesy of Chromasens.

The various vision technologies offer different strengths. Traditional 2D vision technologies are well suited to determining where in a facility a robot is located, while 3D helps prevent collisions. Relying solely on 3D would mean handling a lot of data. A combination of 2D and 3D vision turns out to be the more cost-effective solution, but Rothmeyer said SICK is getting more requests from industrial customers for 3D solutions.

Inspecting and selecting parts

Some of this interest concerns the use of 3D for quality control inspection, picking parts from a bin, and other industrial applications, said Ryan Morris, SICK’s market product manager for 2D/3D vision and auto identification products. Morris noted that laser triangulation-based 3D vision is often used for inspection.

This approach and structured light techniques offer higher resolution and so can pick out finer details than is possible with time-of-flight sensing. But time of flight has a longer reach and can cover a larger field of view, making it the best choice for collision avoidance.

Morris said that inspection requirements vary by application. Electronics and solar applications often require high-resolution inspection at relatively slow speeds. Consumer goods applications frequently verify item identification or count, and these checks of larger features must be performed very rapidly, with units moving past a point at up to 2.5 m/s. Bottling applications check for cap tilt and presence/absence with submillimeter resolution at up to 600 bottles per minute.

3D vision provides height or depth information, and this can be added to 2D data to reveal features. Courtesy of Chromasens.

Meeting these differing needs forces trade-offs. “You typically will sacrifice speed for resolution or sacrifice resolution for speed,” Morris said.
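The speed-resolution trade-off can be seen with simple arithmetic for a laser-line profiler, where the part's motion supplies one axis of the 3D image. The 2.5 m/s line speed and 600 bottles per minute come from the applications Morris describes; the 0.5 mm and 0.25 mm sample spacings and the 5 kHz maximum profile rate are hypothetical values chosen for illustration.

```python
def required_profile_rate_hz(line_speed_m_s: float, spacing_mm: float) -> float:
    """Profiles per second needed so successive laser-line profiles are spacing_mm apart."""
    return line_speed_m_s * 1000.0 / spacing_mm


def max_line_speed_m_s(profile_rate_hz: float, spacing_mm: float) -> float:
    """Fastest line speed a sensor with a fixed profile rate can sample at spacing_mm."""
    return profile_rate_hz * spacing_mm / 1000.0


def time_per_part_ms(parts_per_minute: float) -> float:
    """Time budget for acquiring and inspecting each part."""
    return 60_000.0 / parts_per_minute


print(required_profile_rate_hz(2.5, 0.5))   # 5000.0 profiles per second
print(required_profile_rate_hz(2.5, 0.25))  # 10000.0: halving the spacing doubles the rate
print(max_line_speed_m_s(5000.0, 0.25))     # 1.25 m/s if the sensor tops out at 5 kHz
print(time_per_part_ms(600))                # 100.0 ms per bottle
```

Halving the spacing along the direction of travel doubles the profile rate the sensor must sustain; if the sensor's rate is fixed, the line must slow down instead.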

SICK has lessened the need for trade-offs by adding custom technology to its boards, with some sensors performing rapid on-chip calculations to speed up 3D imaging. Another benefit of this local processing is that preliminary image analysis can be done in the 3D device itself, which reduces the amount of data that must be moved over the network.

If 3D images must be transferred or saved, Morris recommended that a gigabit network be used. SICK’s products include the capability to store some data on the 3D device itself, he said.
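A rough data budget shows why network capacity matters and why on-device analysis helps. All sensor parameters below (2,560 points per profile, 16-bit height values, 5,000 profiles per second, 16 result values per part) are hypothetical; the 10 parts per second corresponds to the 600-bottle-per-minute line mentioned earlier, and protocol overhead is ignored.

```python
GIGABIT_LINK_MBIT_S = 1000.0


def raw_stream_mbit_s(points_per_profile: int, profiles_per_s: float,
                      bytes_per_point: int) -> float:
    """Raw 3D profile data rate in Mbit/s, ignoring protocol overhead."""
    return points_per_profile * profiles_per_s * bytes_per_point * 8 / 1e6


def results_stream_mbit_s(parts_per_s: float, values_per_part: int,
                          bytes_per_value: int) -> float:
    """Data rate in Mbit/s if the sensor sends only its measurement results per part."""
    return parts_per_s * values_per_part * bytes_per_value * 8 / 1e6


raw = raw_stream_mbit_s(points_per_profile=2560, profiles_per_s=5000, bytes_per_point=2)
on_device = results_stream_mbit_s(parts_per_s=10, values_per_part=16, bytes_per_value=4)
print(f"raw profiles: {raw:.1f} Mbit/s ({raw / GIGABIT_LINK_MBIT_S:.0%} of a gigabit link)")
print(f"on-device results: {on_device * 1000:.2f} kbit/s")
```

Under these assumptions, one raw stream uses about a fifth of a gigabit link (204.8 Mbit/s), so four such sensors sharing the link would need about 819 Mbit/s, while sending only per-part results needs just a few kilobits per second.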

3D imaging resolution affects both the speed of operations and the amount of data generated, which, if too high, can burden networks and storage. So, how low should resolution go?

One guideline comes from Douglas Barker, chief operating officer of Energid Technologies Corp. The Bedford, Mass.-based company’s core product is a motion control software toolkit for robots.

Capturing a part’s location in 3D can help when figuring out what movements a robot must go through during a pick-and-place operation. In Energid’s experience, the resolution of the vision system must be significantly finer than the part being handled.

“We typically use a rough rule of thumb that the part dimensions we’re trying to detect should be about an order of magnitude larger than the resolution of the point cloud generated by the vision system,” Barker said. “If we have 0.3-mm resolution point cloud, the part dimension should be 3 mm or larger.”
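Barker's order-of-magnitude rule can be written as a one-line check. The factor of 10 comes from his quote; the function names are hypothetical, and the rule is a rough guideline rather than a hard limit.

```python
def min_part_dimension_mm(resolution_mm: float, factor: float = 10.0) -> float:
    """Smallest part dimension the rule of thumb allows for a given point-cloud resolution."""
    return resolution_mm * factor


def resolution_is_adequate(part_dimension_mm: float, resolution_mm: float,
                           factor: float = 10.0) -> bool:
    """True if the part is at least `factor` times larger than the point spacing."""
    return part_dimension_mm >= min_part_dimension_mm(resolution_mm, factor)


print(min_part_dimension_mm(0.3))        # 3.0, matching Barker's example
print(resolution_is_adequate(2.0, 0.3))  # False: only about 7 points across the part
print(resolution_is_adequate(5.0, 0.3))  # True
```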

Generating a high-resolution 3D point cloud may take several seconds, he added. If the parts are stationary, though, the point cloud may only need to be produced once. Then, when the robot knows where the parts are, it can pick them up and place them where needed, and then it or other systems can carry out other functions, such as a quality-control inspection.

Dealing with data

However, 3D vision inspection need not produce an enormous amount of data or take a long time to perform, according to Klaus Riemer, product manager for Chromasens GmbH. Headquartered in Konstanz, Germany, the company offers 3D products based on dual line-scan sensors and a stereoscopic approach. The sensors also provide 2D color images, and software can use this information to minimize the processing load and inspection cycle time that 3D vision adds.

“With our product having 2D and 3D output, the application first can do a 2D analysis [that] outputs ROIs [regions of interest], which can be used to restrict 3D inspection to those ROIs,” Riemer said. “This reduces the need for more processing power — or speeds up the inspection.”
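Riemer's 2D-first workflow can be sketched as two steps: a 2D pass that finds a region of interest, then a 3D measurement that reads only the height pixels inside it. This is not Chromasens's software. The threshold-and-bounding-box 2D step is a stand-in for whatever 2D analysis an application actually uses, and the image sizes, values, and function names are hypothetical.

```python
from typing import List, Optional, Tuple

Image = List[List[float]]
ROI = Tuple[int, int, int, int]  # (row0, col0, row1, col1); end bounds are exclusive


def roi_from_2d(image: Image, threshold: float) -> Optional[ROI]:
    """Stand-in 2D step: bounding box of pixels brighter than threshold, or None."""
    hits = [(r, c) for r, row in enumerate(image) for c, v in enumerate(row) if v > threshold]
    if not hits:
        return None
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return (min(rows), min(cols), max(rows) + 1, max(cols) + 1)


def max_height_in_roi(height_map: Image, roi: ROI) -> Tuple[float, int]:
    """3D step restricted to the ROI: max height and number of height pixels examined."""
    r0, c0, r1, c1 = roi
    values = [height_map[r][c] for r in range(r0, r1) for c in range(c0, c1)]
    return max(values), len(values)


# Usage: a 6 x 6 scene with one bright component and a tall outlier elsewhere.
intensity = [[0.0] * 6 for _ in range(6)]
height = [[0.0] * 6 for _ in range(6)]
for r in (2, 3):
    for c in (1, 2):
        intensity[r][c] = 200.0
height[3][2] = 1.5  # component height (mm)
height[0][5] = 9.0  # outside the ROI, so never examined
roi = roi_from_2d(intensity, threshold=100.0)
print(roi, max_height_in_roi(height, roi))  # (2, 1, 4, 3) (1.5, 4): 4 of 36 pixels
```

Here the 3D step examines 4 of 36 height pixels and never touches the tall outlier outside the ROI; the savings grow as the ROIs shrink relative to the full frame.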

He said 3D data is more complex than the 2D equivalent. This makes inspection a more complex task, which means deep learning and other software techniques may be necessary. More computationally powerful processing hardware may also be required.

And there may be a need to beef up interface and network data rates, said Donal Waide, director of sales at Woburn, Mass.-based BitFlow Inc. The company makes industrial frame grabbers and software for imaging, with a focus on interfacing to cameras that have high data or frame rates.

BitFlow’s experience with 3D vision has been that the interface between the camera and the system can be critical, particularly in industrial settings. Some interfaces and cables are more prone to 3D data loss than others, Waide said. He also pointed out that some interfaces do not provide data on a schedule and so are less deterministic than others. He recommended a CoaXPress (CXP) interface as the best way to handle what can be the very large amounts of data generated by 3D imaging.

As for the future, Waide predicts that 3D imaging will become more commonplace as key aspects of the technology continue to advance.

“3D has become more inexpensive and less complex, two reasons it is increasingly being deployed,” he said.

The 3D Vision Picture

3D imaging can be achieved in various ways, and each approach has its own strengths and weaknesses. Some of the more widespread methods are:

Time of flight (TOF). This technique works by measuring the time light takes to travel to a point on an object and back. Lidar works this way and is often used for imaging at longer distances. Lidar tends to be costly, though, and because a scanning lidar picks up only objects along its beam path, the beam must sweep around the environment, often through a full 360°, to build a 3D image.

Stereoscopic vision. This method works by integrating images from two sensors, similar to how humans integrate images from their two eyes to achieve 3D perception. In machine vision, software combines the images from two cameras by matching the same features in both views, and proper illumination is required.

Structured light. With this technology, found in today’s smartphones and other consumer goods, distortions in a projected dot pattern reveal depth information. Structured light offers high resolution but is limited in reach and field of view.

Laser triangulation. With this method, the location of a laser dot on a sensor, combined with knowledge of where the laser is in relation to the sensor, yields distance. Laser triangulation is immune to changes in ambient lighting, but generating a 3D image does require that either the part or the dot move.
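The ranging principles above reduce to short textbook formulas. This sketch assumes idealized geometry: a pinhole camera, a rectified stereo pair with focal length expressed in pixels, and a laser beam parallel to the camera's optical axis at a known offset. Structured light also recovers depth by triangulation, so it follows the same inverse relationship. All numeric values and function names are hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Time of flight: light travels out and back, so halve the total path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Rectified stereo pair: depth is inversely proportional to disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def laser_triangulation_depth_m(focal_px: float, laser_offset_m: float,
                                dot_offset_px: float) -> float:
    """Laser parallel to the camera axis at laser_offset_m: the dot's image offset
    from the principal point shrinks as the surface moves farther away."""
    if dot_offset_px <= 0:
        raise ValueError("dot offset must be positive")
    return focal_px * laser_offset_m / dot_offset_px


print(tof_distance_m(10e-9))              # ~1.5 m for a 10 ns round trip
print(tof_distance_m(1e-9))               # ~0.15 m: each ns of timing error is ~15 cm
print(stereo_depth_m(1000.0, 0.1, 50.0))  # 2.0 m
print(stereo_depth_m(1000.0, 0.1, 49.0))  # ~2.04 m: one pixel of disparity is ~4 cm here
print(laser_triangulation_depth_m(1000.0, 0.05, 250.0))  # 0.2 m
```

Because depth is inversely proportional to disparity or dot offset in the triangulation formulas, a one-pixel error costs more depth the farther away the surface is, which fits the limited reach noted for structured light.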
